Situational Awareness: Unifying Multiple Data Sources into a Single Operational Picture

Designing collaborative spatial systems that enable real-time planning, coordination, and decision-making.

As Creative Director and Technical Program Manager within Microsoft Services’ Mixed Reality Studios, I led customer engagements, shaped business development strategy, architected UX systems, and guided product direction for immersive geospatial platforms that unified multi-source data into shared operational views across public and private sector environments.

The Challenge: From Fragmented Feeds to Unified Understanding

Operational teams often rely on disconnected data streams:

ISR feeds

Geospatial intelligence

Air and ground asset tracking

Communications metadata

Environmental overlays

Approval chains and command workflows

The challenge was not just visualizing data in 3D; it was constructing a framework that allowed distributed teams to:

See the same operational workspace.

Interact with it collaboratively.

Maintain role clarity.

Move from Identification → Planning → Execution → Assessment.

Spatial Design for Situational Awareness

Our studio focused on building spatial systems for situational awareness: platforms that translated complex geospatial, intelligence, and asset data into shared operational understanding.

In this work, I operated at the intersection of business development, operational strategy, UX architecture, and engineering execution, ensuring that exploratory concepts matured into scalable capabilities deployed across multiple operational environments.

An early product initiative was transitioned to our studio for exploration. We expanded it beyond a standalone prototype into a scalable capability model implemented across diverse organizational contexts.

Delivering these capabilities required aligning stakeholder objectives, defining structured spatial workflows, and guiding cross-functional engineering teams from concept validation through deployment.

Key Responsibilities:

Partnered with stakeholders to define measurable operational objectives.

Translated mission requirements into scalable spatial capability models.

Defined collaborative spatial workflows across varied mission contexts.

Expanded a single product initiative into multiple tailored implementations.

Aligned cross-functional engineering execution from prototype through production.

Maintained architectural continuity across deployments spanning distinct operational environments.

Architectural Framework for Multi-Source Data Integration

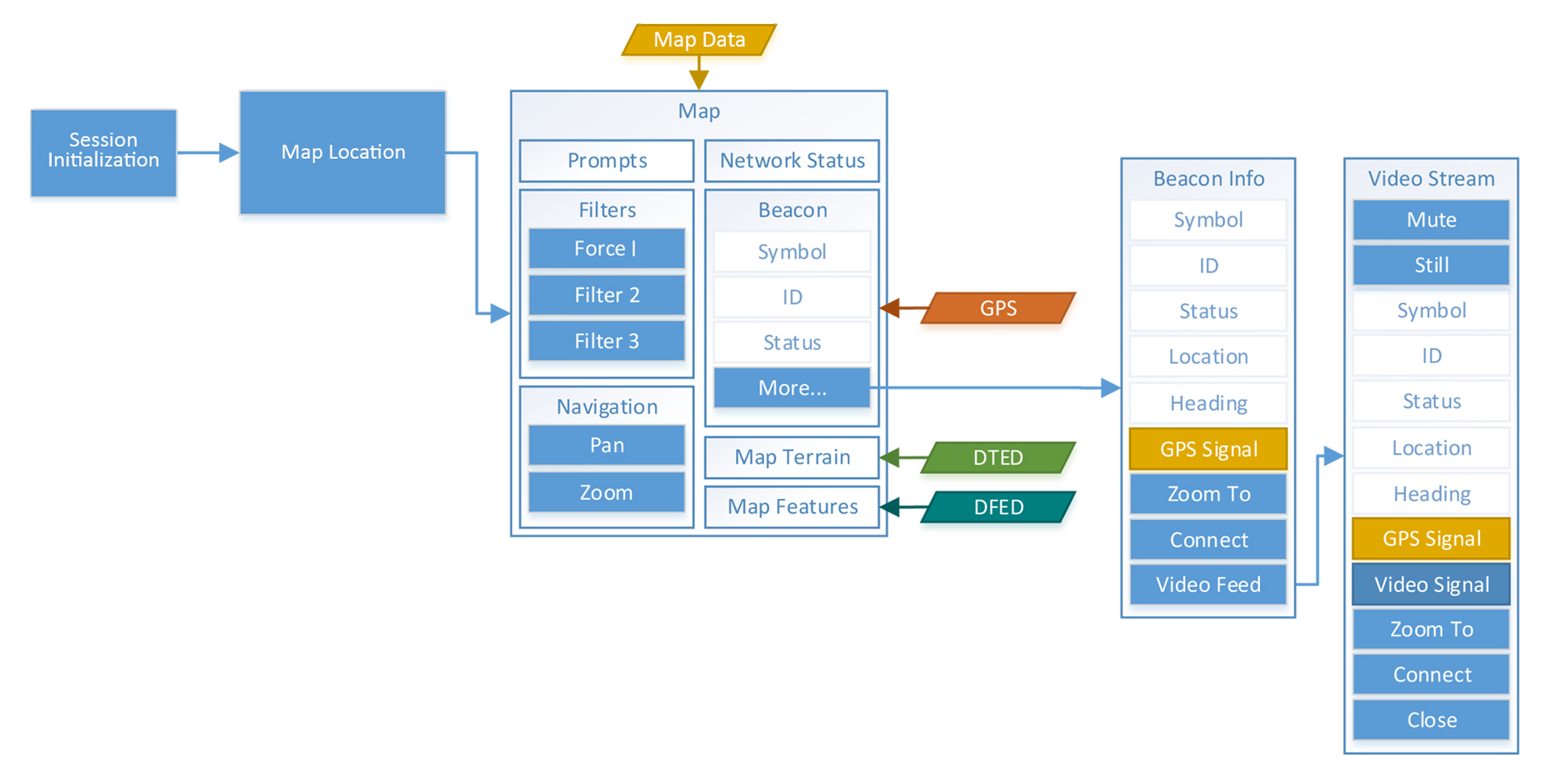

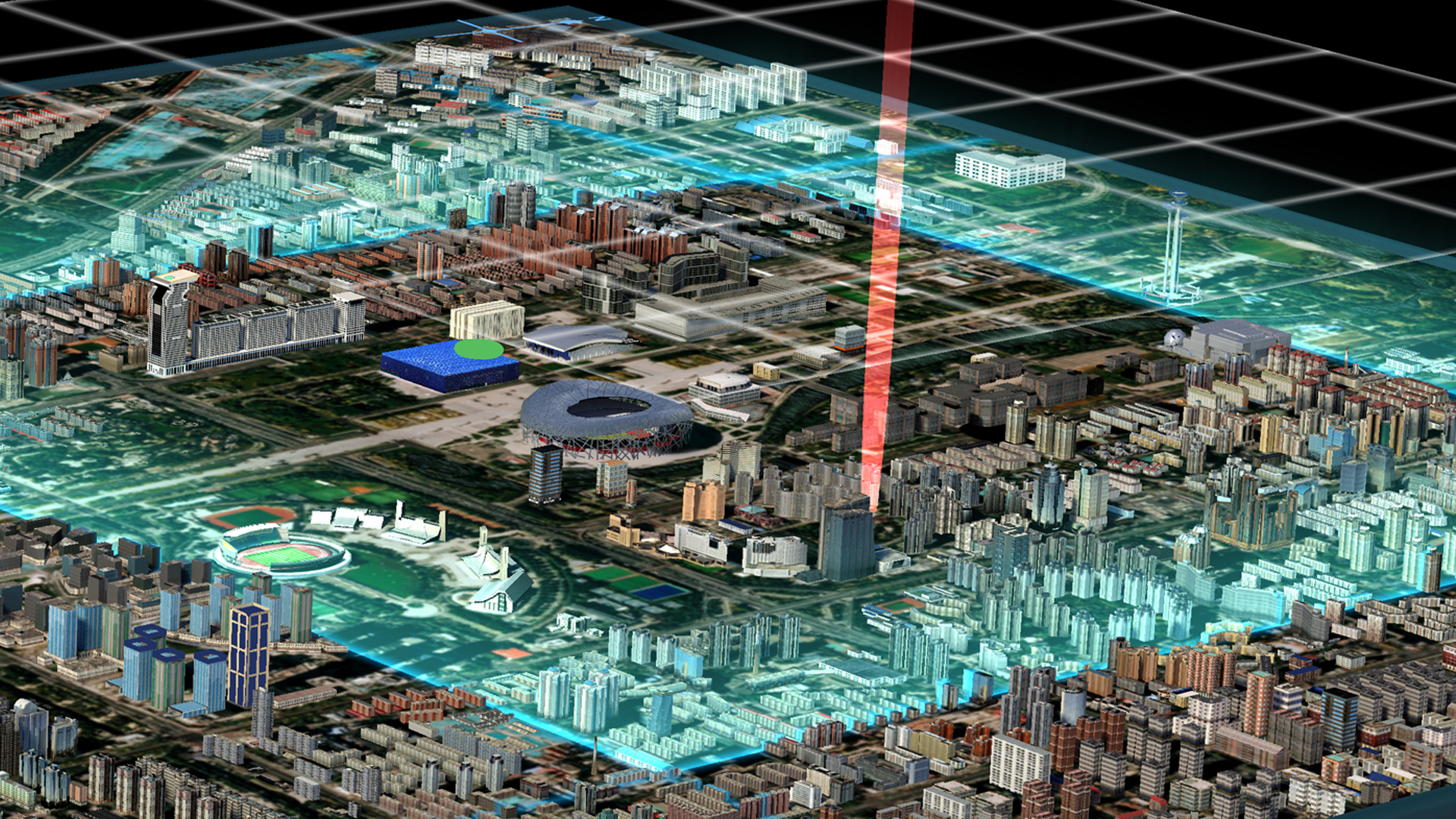

The technical foundation behind these capabilities was a modular spatial architecture designed to ingest, normalize, and synchronize heterogeneous operational data within a shared environment.

Rather than a static visualization layer, the framework functioned as a live operational model, integrating intelligence feeds, asset states, infrastructure overlays, and workflow status into a persistent spatial context accessible to distributed users.

Core Framework Capabilities:

Ingestion and normalization of live geospatial, sensor, and intelligence feeds.

Layer-based geospatial visualization across dynamic data sources.

Role-aware interface states within a shared persistent spatial model.

Event-driven data synchronization across distributed users.

Integration of asset readiness, approval workflows, and engagement states.

Configurable capability modules enabling scenario-specific adaptation.
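As a rough illustration of the ingestion, normalization, and event-driven synchronization capabilities listed above, the following is a minimal Python sketch, not the production framework. The `SpatialEntity` schema, the two raw payload shapes, and the `SharedModel` class are all hypothetical names introduced only for this example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class SpatialEntity:
    """Common record every feed is normalized into (hypothetical schema)."""
    entity_id: str
    layer: str      # visualization layer, e.g. "assets" or "environment"
    lat: float
    lon: float
    status: str


def normalize_asset(raw: dict) -> SpatialEntity:
    # Assumed asset-tracking payload: {"id", "position": [lon, lat], "state"}
    lon, lat = raw["position"]
    return SpatialEntity(raw["id"], "assets", lat, lon, raw["state"])


def normalize_sensor(raw: dict) -> SpatialEntity:
    # Assumed sensor payload: {"sensor", "latitude", "longitude", "reading"}
    return SpatialEntity(
        raw["sensor"], "environment",
        raw["latitude"], raw["longitude"], raw["reading"],
    )


class SharedModel:
    """Persistent model that notifies every subscriber when an entity changes."""

    def __init__(self) -> None:
        self.entities: Dict[str, SpatialEntity] = {}
        self._subscribers: List[Callable[[SpatialEntity], None]] = []

    def subscribe(self, callback: Callable[[SpatialEntity], None]) -> None:
        self._subscribers.append(callback)

    def upsert(self, entity: SpatialEntity) -> bool:
        # Skip unchanged records so connected users only receive real updates.
        if self.entities.get(entity.entity_id) == entity:
            return False
        self.entities[entity.entity_id] = entity
        for notify in self._subscribers:
            notify(entity)
        return True
```

In this sketch each connected user would register a callback with `subscribe`, and each feed adapter would pass its normalized records to `upsert`. Because every source is reduced to the same record type, the layer and role logic downstream never needs to know which feed a record came from.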

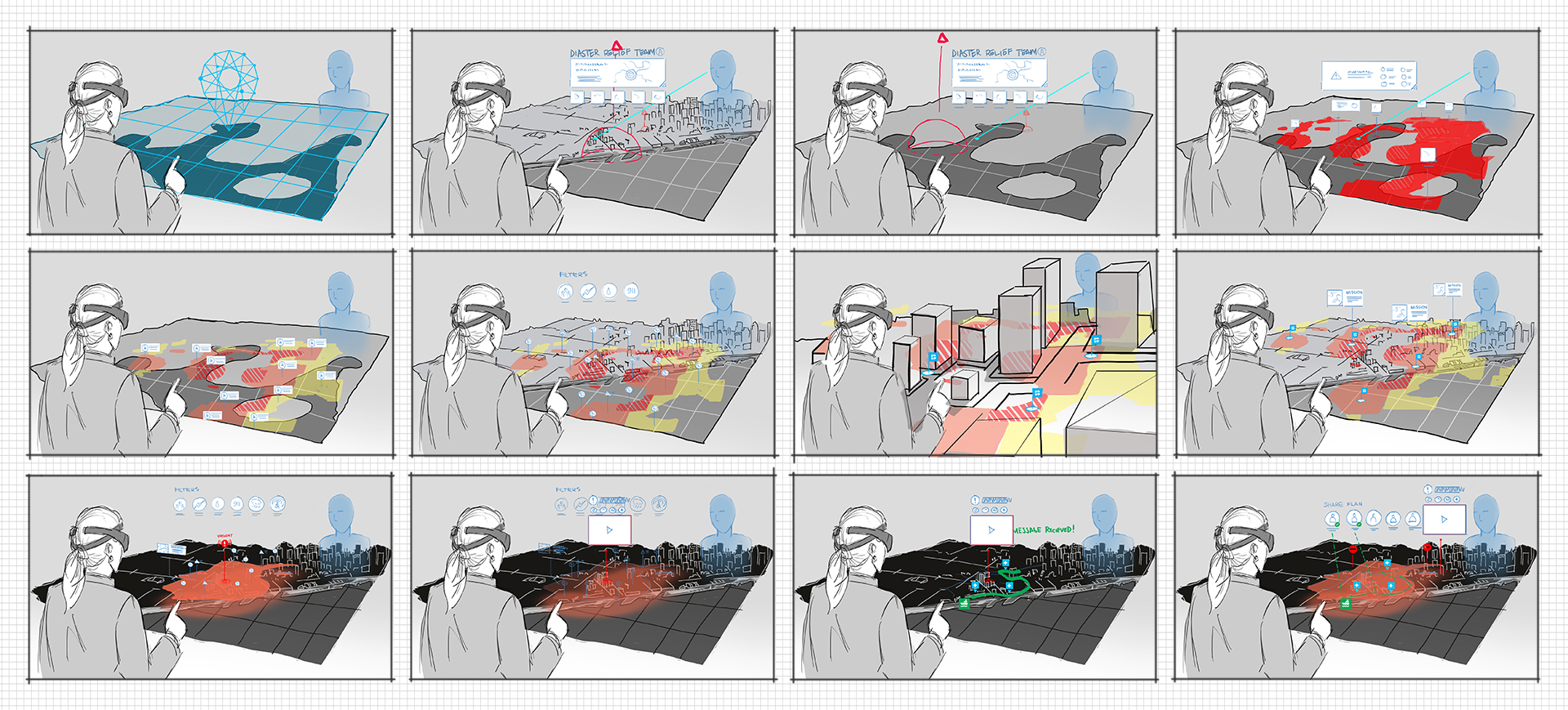

Designing Collaborative Spatial Workflows

Traditional 2D command interfaces fragment spatial reasoning across dashboards and monitors. This work restructured interaction around a shared spatial model, enabling coordinated, multi-role decision-making within a persistent 3D operational environment.

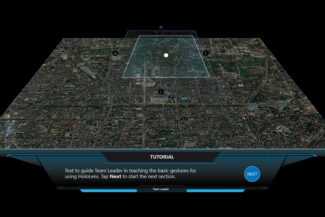

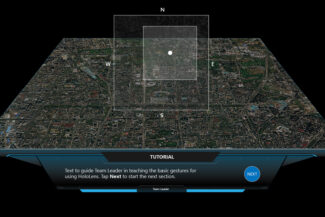

Interaction patterns were structured to preserve spatial continuity while enabling deliberate operational progression. A compass-driven navigation model established bounded spatial control within a shared operational frame, constraining panning, reinforcing orientation, and supporting progressive focus.

Core spatial workflow capabilities:

Shared spatial model synchronization across users.

Compass-driven navigation and orientation control.

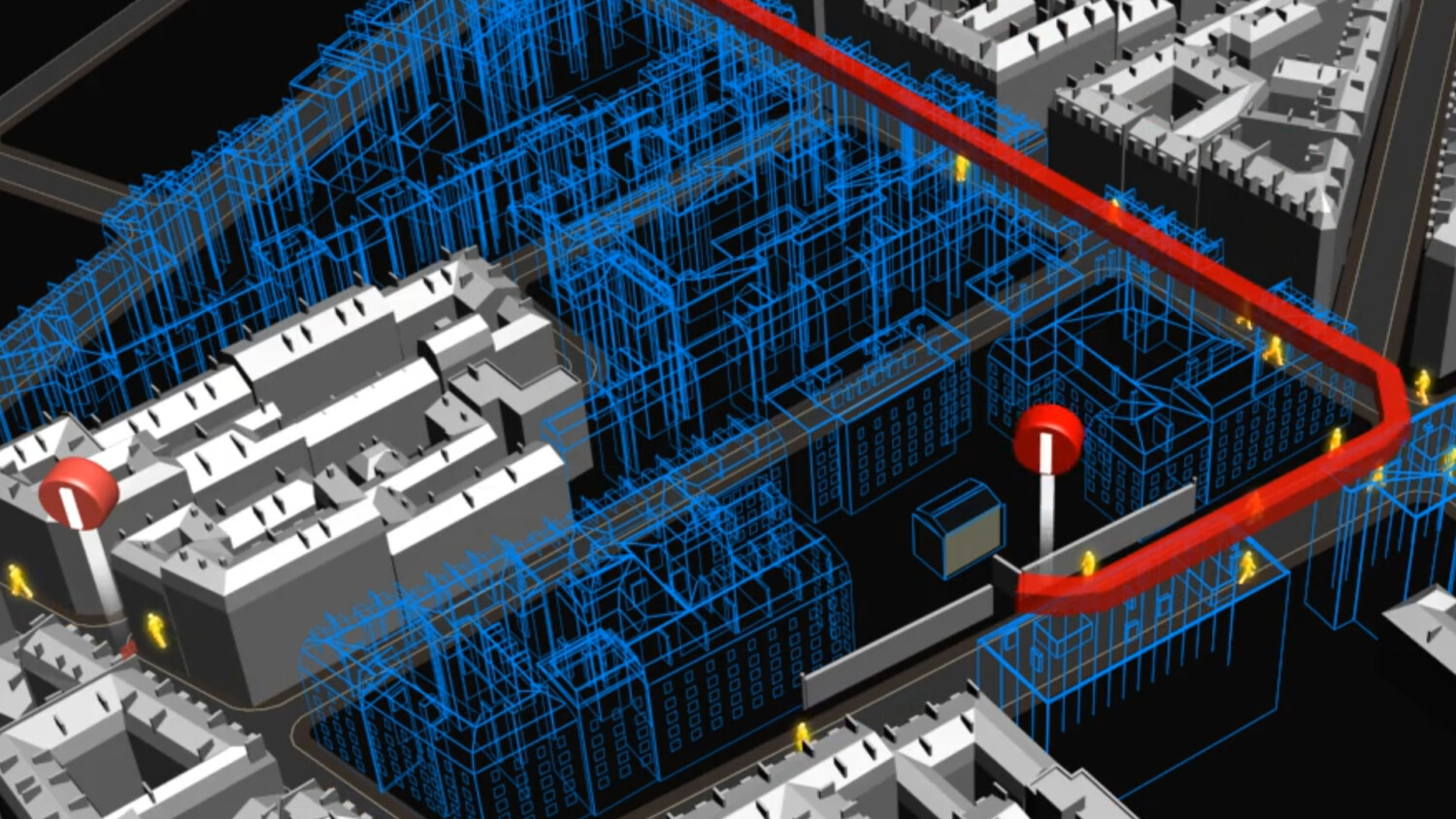

Waypoint and route planning within bounded spatial frames.

Role-based interaction gating and task sequencing.

Multi-user gaze and cursor awareness.

Progressive spatial framing to reduce cognitive load.
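Role-based interaction gating and task sequencing can be pictured with a small Python sketch. It assumes the four phases named earlier (Identification, Planning, Execution, Assessment); the role names, the action names, and the `Workflow` class are hypothetical and stand in for whatever roles a given deployment defined.

```python
from enum import Enum


class Phase(Enum):
    IDENTIFICATION = 1
    PLANNING = 2
    EXECUTION = 3
    ASSESSMENT = 4


# Hypothetical mapping of roles to the actions each may perform.
PERMISSIONS = {
    "analyst": {"annotate"},
    "planner": {"annotate", "plan_route"},
    "commander": {"annotate", "plan_route", "advance_phase"},
}


class Workflow:
    """Tracks the shared phase and gates actions by role."""

    def __init__(self) -> None:
        self.phase = Phase.IDENTIFICATION

    def can(self, role: str, action: str) -> bool:
        # Unknown roles get no permissions.
        return action in PERMISSIONS.get(role, set())

    def advance(self, role: str) -> Phase:
        if not self.can(role, "advance_phase"):
            raise PermissionError(f"role '{role}' may not advance the workflow")
        if self.phase is Phase.ASSESSMENT:
            raise ValueError("workflow is already in its final phase")
        # Phases progress strictly in order; none can be skipped.
        self.phase = Phase(self.phase.value + 1)
        return self.phase
```

Keeping the phase in one shared object and checking permissions at the point of action is one simple way to let every participant see the same state while only the appropriate role moves the sequence forward.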

Prototyping & Validation

Capabilities were pressure-tested through iterative prototyping across varied operational scenarios. Spatial clarity, workflow pacing, and interaction sequencing were evaluated under dynamic mission conditions to ensure consistent system behavior as complexity increased.

Validation focused on constraint enforcement, overlay hierarchy, and route logic under dynamic operational inputs, confirming that navigation, asset movement, and workflow progression remained coherent within a bounded spatial model.

Core Areas Refined Through Iteration:

Constrained panning and zoom within spatial framing rules.

Day vs. night legibility across layered geospatial states.

Overlay hierarchy and visual dominance calibration.

Dynamic route planning and path validation logic.

Ingress and egress modeling within mission-defined boundaries.
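Two of the refinements above, constrained panning and route validation within a boundary, lend themselves to a short Python sketch. It assumes a flat, axis-aligned rectangular frame for simplicity; the `Bounds` type and both function names are hypothetical, and a real geospatial system would work with projected coordinates and arbitrary boundary shapes.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Bounds:
    """Axis-aligned rectangle standing in for a bounded spatial frame."""
    min_x: float
    min_y: float
    max_x: float
    max_y: float

    def contains(self, x: float, y: float) -> bool:
        return self.min_x <= x <= self.max_x and self.min_y <= y <= self.max_y


def _clamp_axis(center: float, half: float, lo: float, hi: float) -> float:
    # If the viewport is wider than the frame, pin it to the frame's midpoint.
    if 2 * half >= hi - lo:
        return (lo + hi) / 2
    return min(max(center, lo + half), hi - half)


def clamp_pan(cx: float, cy: float, half_w: float, half_h: float,
              frame: Bounds) -> Tuple[float, float]:
    """Return the nearest viewport center that keeps the view inside the frame."""
    return (
        _clamp_axis(cx, half_w, frame.min_x, frame.max_x),
        _clamp_axis(cy, half_h, frame.min_y, frame.max_y),
    )


def invalid_waypoints(route: List[Tuple[float, float]],
                      boundary: Bounds) -> List[int]:
    """Return the indices of waypoints that fall outside the boundary."""
    return [i for i, (x, y) in enumerate(route) if not boundary.contains(x, y)]
```

Because a rectangle is convex, a route whose waypoints all lie inside it also has every straight segment inside it, so checking waypoints is sufficient under this assumption. A non-convex mission boundary would need a per-segment check as well.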

Impact & Platform Leadership

This work established mixed reality as a viable medium for command-level operational planning across distributed teams. By replacing fragmented multi-monitor workflows with a bounded spatial model, the system reduced cognitive load while preserving contextual clarity during complex decision-making.

Beyond a single deployment, the initiative defined a reusable spatial interaction framework, enabling immersive planning, asset coordination, and workflow progression within a structured 3D environment. The architecture demonstrated scalability across varied operational contexts and expanded product exploration across multiple organizations.

This was not a standalone prototype. It represented platform-level spatial system design. The effort required translating operational intent into scalable spatial architecture, aligning business development, UX strategy, and engineering execution, and hardening interaction patterns into repeatable capability frameworks suitable for mission-critical environments.

It reflects a mission-first design approach: building spatial systems that remain coherent under pressure and scale beyond their initial use case.

All locations and operational details are fictitious. Specific customers are intentionally omitted due to sensitivity.

My Role and Areas of Contribution:

Product & Strategy Vision

Align business goals, user needs, and technical realities into clear direction.

Experience & Interface Design (UX/UI)

Design intuitive, scalable experiences balancing usability and aesthetics.

Application & System Design

Design within real engineering constraints and for long-term sustainability.

Creative & Design Leadership

Set direction, mentor teams, and maintain quality through delivery.

Spatial & Environmental Design

Design environments where space and motion guide behavior.

3D Visualization & Prototyping

Use 3D to test concepts, align stakeholders, and reduce overall risk.